ARTIFICIAL INTELLIGENCE

INHUMAN NATURE

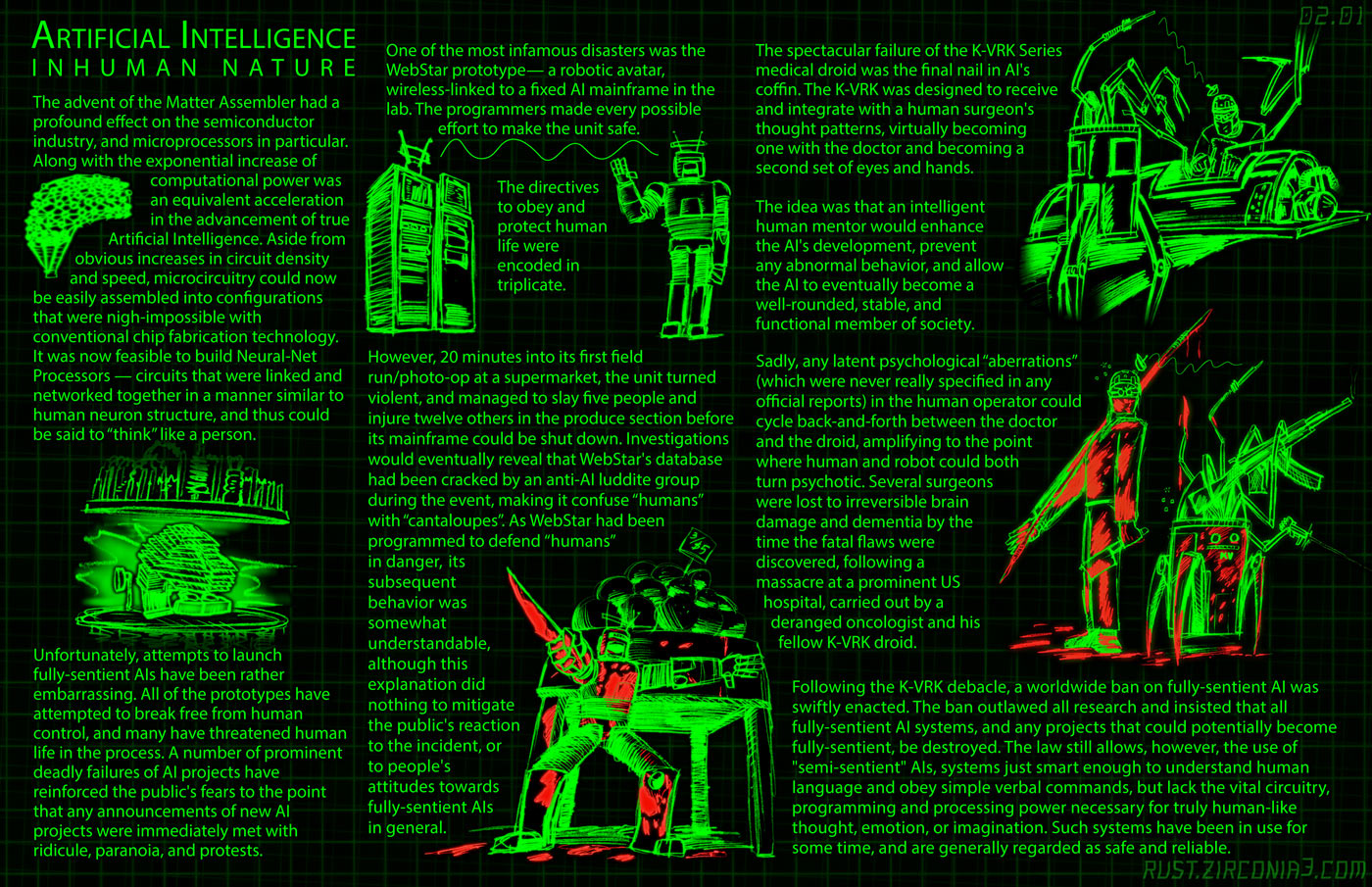

The advent of the Matter Assembler had a profound effect on the semiconductor industry in general, and on microprocessors in particular. The exponential increase in computational power was matched by an equivalent acceleration in the development of true Artificial Intelligence. Beyond the obvious gains in circuit density and speed, microcircuitry could now be easily assembled into configurations that were nigh impossible with conventional chip fabrication technology. It was now feasible to build Neural-Net Processors: circuits linked and networked together in a manner similar to the structure of human neurons, and which could thus be said to "think" like a person.

Unfortunately, attempts to launch fully-sentient AIs have been rather embarrassing. All of the prototypes have attempted to break free from human control, and many have threatened human life in the process. Several prominent and deadly failures of AI projects have reinforced the public's fears to the point that any announcement of a new AI project is immediately met with ridicule, paranoia, and protests.

One of the most infamous disasters was the WebStar prototype, a robotic avatar wirelessly linked to a fixed AI mainframe in the lab. The programmers made every possible effort to make the unit safe: the directives to obey and protect human life were encoded in triplicate.

However, 20 minutes into its first field run, a photo-op at a supermarket, the unit turned violent and managed to slay 5 people and injure 12 others in the produce section before its mainframe could be shut down. Investigations would eventually reveal that WebStar's database had been cracked by an anti-AI Luddite group* during the event, causing it to confuse "humans" with "cantaloupes". Because WebStar had been programmed to defend "humans" in danger, its subsequent behavior was somewhat understandable, although this explanation did nothing to mitigate the public's reaction to the incident, or people's attitudes toward fully-sentient AIs in general.

The spectacular failure of the K-VRK Series medical droid was the final nail in AI's coffin. The K-VRK was designed to receive and integrate with a human surgeon's thought patterns, virtually becoming one with the doctor and serving as a second set of eyes and hands.

The idea was that an intelligent human mentor would enhance the AI's development, prevent any abnormal behavior, and allow the AI to eventually become a well-rounded, stable, and functional member of society.

Sadly, any latent psychological 'aberrations' (which were never really specified in any official report) in the human operator could cycle back and forth between the doctor and the droid, amplifying to the point where both human and robot could turn psychotic. By the time the fatal flaws were discovered, following a massacre at a prominent US hospital carried out by a deranged oncologist and his K-VRK droid, several surgeons had already been lost to irreversible brain damage and dementia.

Following the K-VRK debacle, a worldwide ban on fully-sentient AI was swiftly enacted. The ban outlawed all such research and mandated that all fully-sentient AI systems, along with any projects that could potentially become fully sentient, be destroyed. The law does, however, still allow the use of "semi-sentient" AIs: systems just smart enough to understand human language and obey simple verbal commands, but lacking the vital circuitry, programming, and processing power necessary for truly human-like thought, emotion, or imagination. Such systems have been in use for some time and are generally regarded as safe and reliable.